the Creative Commons Attribution 4.0 License.

Quantifying the impact of Skeptical Science rebuttals in reducing climate misperceptions

John Cook

Bärbel Winkler

Collin J. H. M. Maessen

Timo Lubitz

Doug Bostrom

Dana Nuccitelli

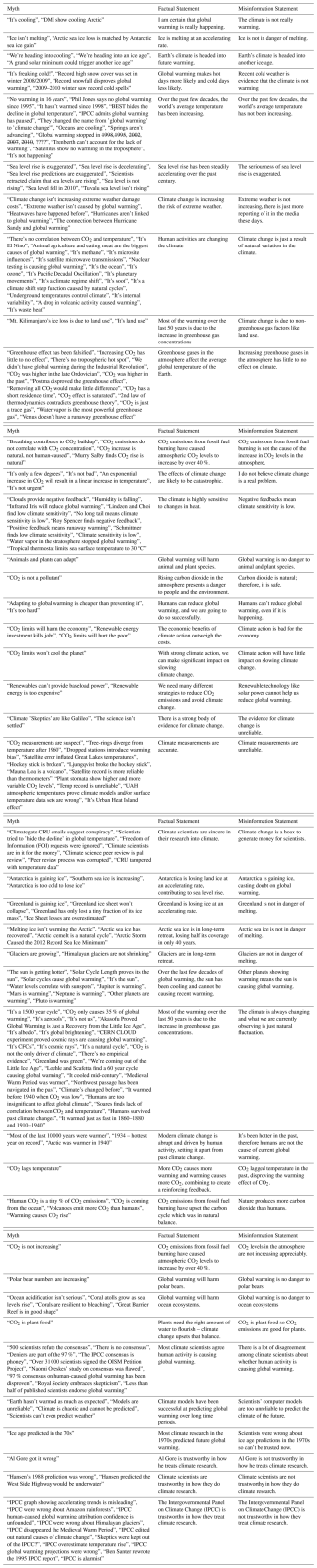

Misinformation about climate change damages society in a number of ways; consequently, resources are needed to support interventions that counter its influence. Aiming to meet this need, Skeptical Science is a highly-visited website featuring 250 rebuttals of misinformation about climate change. The rebuttals are written at three levels – basic, intermediate, and advanced – in order to reach as wide an audience as possible. This study collected survey data from visitors to the website and assessed the effectiveness of rebuttals in reducing acceptance of climate myths and increasing acceptance of climate facts. Our data show that nearly half of the visitors were already highly convinced regarding climate facts. We found that the rebuttals were effective in reducing belief in climate myths, but that some rebuttals produced a concerning reduction in belief in climate facts. The greatest improvement occurred with visitors who began with the most inaccurate climate perceptions. This indicates that the website is useful for two main audiences – those who are convinced about climate change but looking for material to support their own climate communication efforts, and those who disagree with climate facts but are open to new information. We examine potential ways that Skeptical Science rebuttals could be updated to improve their performance in raising climate literacy and critical thinking skills.

Despite the overwhelming scientific consensus on human-caused climate change (Cook et al., 2013, 2016), there is still public confusion over the severity of climate change and therefore insufficient public demand for climate action. A significant contributor to this lack of progress is climate misinformation, which damages society in a number of ways. The obvious impact of climate misinformation is the instilling of false beliefs or lowering of accurate beliefs, with even just a few misleading statistics reducing people's acceptance of the reality of climate change (Ranney and Clark, 2016). However, climate misinformation has more subtle and subversive impacts beyond simply fostering misperceptions. One subversive effect in the cognitive domain is the tendency for misinformation to cancel out efforts to communicate facts. When people are confronted with conflicting pieces of information (e.g., facts and misinformation) and have no way to resolve the conflict, they tend to disengage and believe neither (McCright et al., 2016; van der Linden et al., 2017; Vraga et al., 2020). This impact is highly consequential for educators, scientists, and communicators, as it means that efforts to communicate facts can be cancelled out by misinformation.

Other subversive effects are seen in the social domain, with climate misinformation polarizing the public, having a disproportionate impact on political conservatives. This means that after being exposed to misinformation, people with different political backgrounds end up further from each other in their climate perceptions (Cook et al., 2017a). Another social effect is on scientists who, when attacked, can be influenced to downplay how they report their scientific results, lest they appear to resemble the stereotypes of biased scientists expressed in attacks on them (Lewandowsky et al., 2015). This chilling effect extends beyond the scientific community, with the general public less likely to talk about climate change with friends and family, largely because of fear of pushback (Geiger and Swim, 2016).

Climate misinformation in the form of conspiracy theories also causes damage spilling beyond the issue of climate change. One study found that when people were exposed to a conspiracy theory about global warming, they were less likely to sign a petition in support of measures to reduce global warming and less likely to donate to a charity (van der Linden, 2015). Conspiracy theories also increase people's feelings of powerlessness, uncertainty, and disillusionment, which reduces their intention to engage in politics more broadly (Jolley and Douglas, 2014). This array of negative impacts underscores the need to develop resources and interventions that counter climate misinformation.

Much psychological research has been conducted into effective ways to refute misinformation. One strategy is to dislodge myths with a “replacement fact” that possesses at least the same explanatory relevance as the myth (Ecker et al., 2010; Seifert, 2002). However, factual information alone may not be enough; when people are presented with both a fact and a myth that contradicts it, the two can cancel each other out (McCright et al., 2016; van der Linden et al., 2017; Vraga et al., 2020). This risk can be mitigated by explaining the misleading rhetorical techniques or logical fallacies the misinformation uses to cast doubt on the facts (Cook et al., 2017a). These disparate strategies have been synthesised in the Debunking Handbook 2020, which recommends that debunkings adopt a fact-myth-fallacy-fact structure (Lewandowsky et al., 2020). A complementary approach that often incorporates these strategies is misconception-based learning (McCuin et al., 2014) or agnotology-based learning (Bedford and Cook, 2013), which teaches scientific facts by directly debunking science misconceptions.

An increasingly important concept in misinformation research is discernment – the ability to distinguish factual information from misinformation. Discernment is commonly measured by taking the difference between agreement with facts and agreement with misinformation (Pennycook et al., 2021). This is important because concerns have been raised that some anti-misinformation interventions have resulted in reducing discernment, not only reducing agreement with misinformation but also reducing agreement with facts (Modirrousta-Galian and Higham, 2023). Anti-misinformation interventions should seek to raise discernment by increasing the gap between fact agreement and misinformation agreement.
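As a minimal illustration of the discernment measure, the sketch below assumes discernment is mean agreement with factual statements minus mean agreement with misinformation statements, with both rated on the same agreement scale (higher = stronger agreement). The function name and all example ratings are hypothetical and are not data from this study.

```python
from statistics import mean


def discernment(fact_agreement, myth_agreement):
    """Return mean agreement with facts minus mean agreement with myths.

    Both sequences must use the same scale, oriented so that a higher
    number means stronger agreement. A larger result means facts and
    misinformation are being distinguished more sharply.
    """
    if not fact_agreement or not myth_agreement:
        raise ValueError("need at least one fact rating and one myth rating")
    return mean(fact_agreement) - mean(myth_agreement)


# Hypothetical ratings on a 1-6 agreement scale (6 = strongly agree).
before = discernment([6, 5, 6, 4], [2, 1, 3, 2])
after = discernment([5, 5, 5, 4], [1, 1, 2, 1])
print(before, after)  # prints: 3.25 3.5
```

In this made-up example agreement with the facts falls, yet discernment still rises because agreement with the myths falls further. This is the pattern a discernment-based evaluation is designed to separate from a simple drop in fact agreement.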

1.1 Skeptical Science

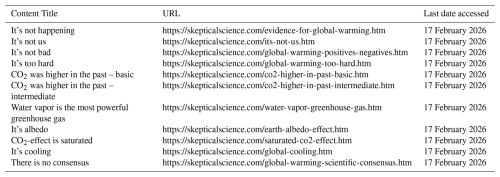

Skeptical Science is an international website and non-profit science education organization founded by John Cook in 2007. The main purpose of the website is to debunk misconceptions and misinformation about human-caused climate change, featuring more than 250 rebuttals of climate myths. The website is maintained by a team of academics and volunteers from around the globe who actively contribute to published research. One highlight of Skeptical Science research output is an often-cited 97 % consensus paper (Cook et al., 2013), which was affirmed by a subsequent synthesis of consensus studies (Cook et al., 2016).

Other researchers have also drawn upon or analysed Skeptical Science's content. For example, one study analysed user comments on skepticalscience.com, finding that one third of posts indicated a desire to communicate facts or educate (Metcalfe, 2020). The website's encyclopedic list of climate myths has also been influential, with Elsasser and Dunlap (2013) drawing upon the 103 listed rebuttals (at the time) in order to identify the prevalence of specific climate myths in newspaper op-eds. A later analysis of climate denial referenced Skeptical Science's 193 rebuttals (at the time), indicating the steady accumulation of debunkings (Hansson, 2017). The taxonomy of myths also served as the starting point in the inductive development of a comprehensive taxonomy of contrarian claims about climate change (Coan et al., 2021). The website content is currently being used to train models that use generative AI to automatically debunk climate misinformation (Zanartu et al., 2024).

The rebuttals are written at three levels, offering basic, intermediate, and advanced versions. They tackle common misconceptions about climate change such as “Global warming is not happening”, “It's not caused by human activity”, “Climate impacts are not bad”, and “Climate solutions are too hard”. The rebuttals receive most of the website's traffic, with some individual rebuttals viewed more than 20 000 times per month. For ease of access, they can be listed by popularity, by fixed number (for ease of reference), or by taxonomic category.

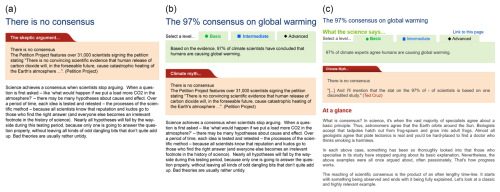

Over time, the design of the rebuttal content has evolved to bring it more in line with the debunking best practices recommended by psychological research. The rebuttals initially led with and emphasized the myth being debunked (Fig. 1a). Subsequently, they were adapted to a format that de-emphasises the myth, following Schwarz et al. (2016) (Fig. 1b).

In late 2022, a thorough rebuttal revision project was initiated, motivated by the years that had passed since some rebuttals had been written and the advances in climate science that had occurred during that time. Given the important role of readability on reading comprehension (Zainurrahman et al., 2024), the rebuttals were made accessible to a wider range of readers with an “at a glance” primer section added to the start of the basic version of selected rebuttals (Fig. 1c).

Figure 1. (a) First version of rebuttal, (b) second version of rebuttal with initial fact and basic/intermediate/advanced levels, (c) current version with “At a glance” section.

Despite the considerable effort put into rebuttal creation and revision over many years, no research had assessed the effectiveness of the rebuttals in countering climate misinformation. Such an analysis could inform the development of future rebuttals and might also help people formulate their own rebuttals in work outside Skeptical Science. Consequently, this study explores the research question: how effective are the Skeptical Science rebuttals in reducing acceptance of climate myths and increasing acceptance of climate facts?

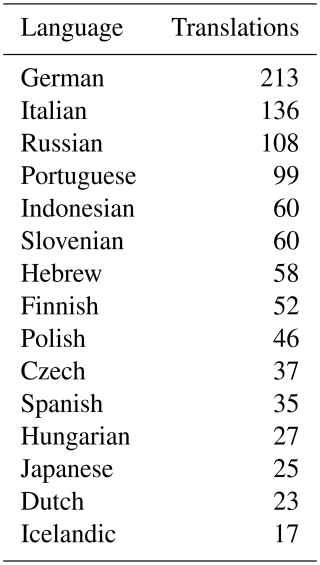

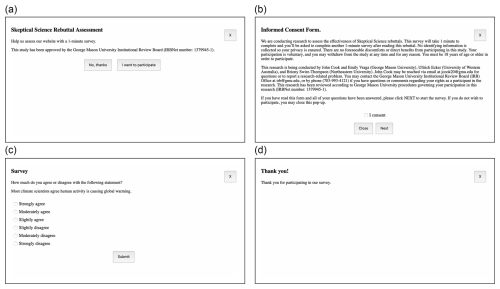

This study collected survey data from a selection of visitors to https://skepticalscience.com (last access: 17 February 2026). Specifically, visitors who arrived directly at a rebuttal having come from https://google.com, https://google.co.uk, or https://google.com.au (last access: 17 February 2026) were invited to participate in the research. In other words, the invited users had conducted a search on Google and then clicked on a link to a Skeptical Science rebuttal in the organic search results.1 Users who arrived at a non-English rebuttal were excluded from the final analysis because the research was conducted in English. Invited visitors were shown a modal (an industry term for a pop-up box overlaying the webpage) asking if they wanted to participate (Fig. 2a). Visitors who indicated they wanted to participate were shown a consent form informing them about the experiment design and how data would be handled (Fig. 2b).

If users consented, they were shown a single statement about climate change and asked to indicate their level of agreement on a 6-point Likert scale from “Strongly agree” to “Strongly disagree” (Fig. 2c). “Strongly agree” answers were assigned a value of 1, while “Strongly disagree” answers were assigned a value of 6. Users were randomly shown either a factual or a misinformation statement relevant to the rebuttal (all statements are listed in Table A1). Answers to factual statements were reverse scored so that higher values equated to more accurate answers. Once they completed this single survey item, participants proceeded to read the rebuttal. If they scrolled to the end of the rebuttal, indicating that they had read it, another modal was displayed, inviting them to again indicate their level of agreement with the same factual/misinformation statement. Users who did not scroll to the end of the rebuttal were not shown the second survey question and were excluded from the research data. After answering the final question, participants were thanked for their participation and could close the survey (Fig. 2d).
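The scoring rule described above can be sketched as follows. This is a hypothetical illustration, not the study's code: it assumes raw answers are stored as integers from 1 (“Strongly agree”) to 6 (“Strongly disagree”), and the function names are invented. Reverse scoring a factual statement as 7 minus the raw value places both statement types on one accuracy scale on which 6 is the most accurate answer.

```python
def accuracy(raw_score: int, is_fact: bool) -> int:
    """Convert a raw 6-point Likert code into an accuracy score.

    Raw codes run from 1 ("Strongly agree") to 6 ("Strongly disagree").
    Myth statements keep the raw code, since disagreeing with a myth is
    accurate. Fact statements are reverse scored (7 - raw) so that
    strong agreement with a fact also maps to 6.
    """
    if not 1 <= raw_score <= 6:
        raise ValueError(f"raw score must be 1-6, got {raw_score}")
    return 7 - raw_score if is_fact else raw_score


def accuracy_change(pre_raw: int, post_raw: int, is_fact: bool) -> int:
    """Post-rebuttal minus pre-rebuttal accuracy; positive means more accurate."""
    return accuracy(post_raw, is_fact) - accuracy(pre_raw, is_fact)


# Hypothetical participant shown a factual statement whose raw answer moves from 4 to 2.
print(accuracy_change(4, 2, is_fact=True))  # prints: 2
```

Storing the raw code and deriving accuracy in one place keeps the reverse scoring from being applied twice or forgotten when fact and myth responses are later pooled.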

Figure 2. Screenshots of modals used in the experiment design. (a) Invitation to participate in research. (b) Informed consent form detailing research design. (c) Survey question. (d) Final thank you modal.

As well as the answer to the survey question, the user's IP address was recorded so that users whose IP address already appeared among existing research participants were not invited on any subsequent visits (IP addresses were deleted in the anonymised version of the dataset). We also recorded Start Time (when the first survey question was loaded) and End Time (when the post-rebuttal survey was loaded). Time Spent was calculated as End Time minus Start Time, noting that this also includes the time spent filling out the pre-rebuttal survey. Data collection occurred from November 2021 to July 2025. Over this period, 858 016 visitors were shown the pop-up invitation to participate in the research.

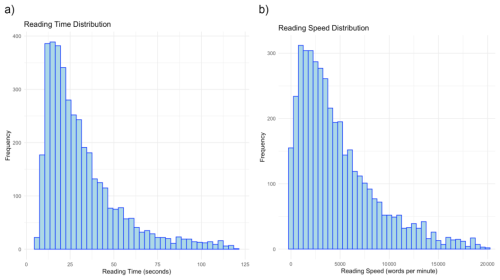

Of the 13 432 people who consented to participate in the research and filled out the pre-rebuttal survey, 6261 (46.6 %) went on to fill out the post-rebuttal survey. Of these, 3146 participants were shown a factual statement in the survey item and 3115 were shown a myth statement, with fact or myth randomly allocated. The mean time spent on the rebuttals was 4 min and the median was 1 min, indicating that many readers scrolled through the rebuttal quickly (see Fig. A7.1 in the Appendices for the distribution of reading times and speeds). Although some participants showed fast reading speeds, they were not excluded from the analysis because they represent real-world skimming behaviour and hence offer external validity.
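The exclusion rule and timing summary described in the two paragraphs above can be sketched as below. The record layout (`Response` and its fields) and the sample data are hypothetical assumptions for illustration only; the sketch simply keeps participants who reached the post-rebuttal survey, computes Time Spent as End Time minus Start Time, and reports the completion rate together with the mean and median.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from statistics import mean, median
from typing import Optional


@dataclass
class Response:
    """One participant's record (hypothetical schema)."""
    pre: int                        # raw pre-rebuttal answer, 1-6
    start: datetime                 # when the first survey question loaded
    post: Optional[int] = None      # None if they never reached the end
    end: Optional[datetime] = None  # when the post-rebuttal survey loaded


def completed(responses):
    """Keep only participants who answered both surveys."""
    return [r for r in responses if r.post is not None and r.end is not None]


def minutes_spent(r: Response) -> float:
    """End minus Start; this includes time spent on the pre-rebuttal question."""
    return (r.end - r.start).total_seconds() / 60


def summarise(responses):
    done = completed(responses)
    if not done:
        raise ValueError("no completed responses")
    times = [minutes_spent(r) for r in done]
    return {
        "completion_rate": len(done) / len(responses),
        "mean_minutes": mean(times),
        "median_minutes": median(times),
    }


t0 = datetime(2022, 1, 1, 12, 0)
sample = [
    Response(pre=2, start=t0, post=4, end=t0 + timedelta(minutes=1)),
    Response(pre=6, start=t0, post=6, end=t0 + timedelta(minutes=1)),
    Response(pre=5, start=t0, post=5, end=t0 + timedelta(minutes=10)),
    Response(pre=1, start=t0),  # did not scroll to the end, so excluded
]
print(summarise(sample))
# prints: {'completion_rate': 0.75, 'mean_minutes': 4.0, 'median_minutes': 1.0}
```

A single slow reader pulls the mean well above the median in this toy sample, which is one reason to report both. Applied to the study's counts, the same completion-rate arithmetic gives 6261 / 13 432 ≈ 0.466.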

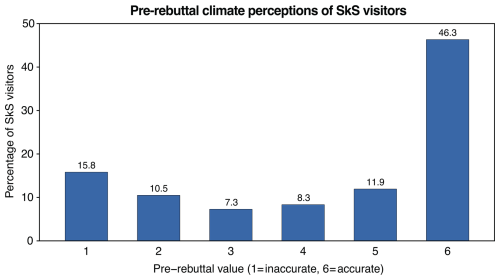

The majority of participants came to the website already convinced about climate change. Figure 3 shows the distribution of pre-rebuttal beliefs: nearly half of the participants (46.3 %) fully agreed with the climate fact or fully disagreed with the climate myth. In this figure and throughout our results, we use a single measure, accuracy, in which strong agreement with the factual statement and strong disagreement with the myth statement are both designated the most accurate response. Interestingly, the distribution was bimodal, with peaks at both extremes of the scale, indicating that most visitors held a strong opinion about climate change one way or the other and only a minority were undecided.

Figure 3. Distribution of climate perceptions in the pre-rebuttal survey. 1 indicates an inaccurate answer and 6 an accurate answer to the question shown in Fig. 2c.

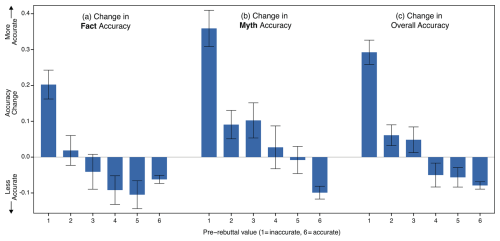

Comparing pre-rebuttal and post-rebuttal scores across all participants (i.e., pooling those shown fact statements and those shown myth statements) revealed a small, non-significant improvement in accuracy. This comparison used a Wilcoxon signed-rank test, with the Common Language Effect Size (CLES) as the measure of effect size, and found a non-significant difference between pre- and post-test scores with a small effect size (p=0.49, CLES = 0.05). To examine the change in perceptions in greater detail, we looked separately at responses to factual statements and to myth statements (Fig. 4a and b). There was a significant decrease in agreement with factual statements (p=0.006, CLES = 0.05) and a significant decrease in agreement with myth statements (p=0.001, CLES = 0.08). Overall accuracy therefore improved, but not significantly, because the loss of accuracy on factual statements partially cancelled out the gain in accuracy on myth statements.

Figure 4. Change in accuracy among participants at varying pre-rebuttal values (a positive value means an increase in accuracy). (a) Change in accuracy for participants shown a factual statement (i.e., change in agreement with the factual statement), (b) change in accuracy for participants shown a myth statement (i.e., change in disagreement with the misinformation statement), (c) average change in accuracy for fact and myth statements combined.

The change in accuracy depended significantly on pre-existing accuracy. To explore this, a Spearman's rank-order correlation was performed to determine the relationship between initial belief scores and the magnitude of belief change. There was a significant, strong negative correlation between the two variables, , p<0.001. This suggests that individuals with lower initial scores (i.e., less accurate) experienced greater increases in accuracy than those who started with higher scores. Figure 4c visualizes this dynamic, showing that the improvement in accuracy was greatest for those with the lowest pre-rebuttal accuracy. Among participants who gave an inaccurate value in the pre-rebuttal survey (1–3), 7.2 % switched to an accurate value (4–6) in the post-rebuttal survey.

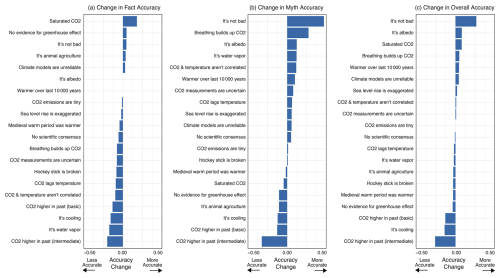

To better understand reader responses to the rebuttals, the change in perception was examined for individual rebuttals. Figure 5 shows the changes in myth and fact accuracy for the 20 rebuttals with at least 50 participants, with positive values representing a shift towards greater accuracy. Consistent with Fig. 4, myth accuracy on average improves more than fact accuracy.
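A per-rebuttal breakdown like the one above can be sketched as a group-and-filter step. This is a hypothetical illustration: the `(rebuttal_id, kind, change)` record format and the function names are assumptions, and "combined" is taken here as the unweighted average of the fact and myth means, which may differ from how Fig. 5c was computed (a pooled mean over all participants is an alternative).

```python
from collections import defaultdict
from statistics import mean


def per_rebuttal_change(records, min_n=50):
    """Summarise accuracy change per rebuttal.

    records: iterable of (rebuttal_id, kind, change) tuples, where kind is
    "fact" or "myth" and change is post minus pre accuracy.
    Rebuttals with fewer than min_n participants, or with no fact or no
    myth responses, are skipped. "combined" is the unweighted average of
    the fact and myth means (an assumption, not necessarily the paper's).
    """
    groups = defaultdict(lambda: {"fact": [], "myth": []})
    for rebuttal_id, kind, change in records:
        if kind not in ("fact", "myth"):
            raise ValueError(f"unknown statement kind: {kind!r}")
        groups[rebuttal_id][kind].append(change)

    summary = {}
    for rebuttal_id, g in groups.items():
        n = len(g["fact"]) + len(g["myth"])
        if n < min_n or not g["fact"] or not g["myth"]:
            continue
        fact_mean, myth_mean = mean(g["fact"]), mean(g["myth"])
        summary[rebuttal_id] = {
            "n": n,
            "fact": fact_mean,
            "myth": myth_mean,
            "combined": (fact_mean + myth_mean) / 2,
        }
    return summary


def rank_by_combined(summary):
    """Rebuttal ids ordered from largest to smallest combined improvement."""
    return sorted(summary, key=lambda rid: summary[rid]["combined"], reverse=True)


# Hypothetical records; min_n is lowered so the tiny example survives the filter.
records = [
    ("a", "fact", 0), ("a", "fact", -1), ("a", "myth", 1), ("a", "myth", 2),
    ("b", "fact", 1), ("b", "myth", 0),
    ("c", "fact", -1), ("c", "fact", -1), ("c", "myth", 0), ("c", "myth", 0),
]
summary = per_rebuttal_change(records, min_n=4)
print(rank_by_combined(summary))  # prints: ['a', 'c']
```

With the real data, slicing the ranked list (for example `ranked[:3]` and `ranked[-3:]`) would give the top and bottom rebuttals selected for the qualitative inspection that follows.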

Figure 5. Change in accuracy with regard to (a) fact perceptions, (b) myth perceptions, and (c) myth and fact combined, for the 20 rebuttals with the most data (positive values mean an increase in accuracy).

Some rebuttals perform well for both fact and myth (e.g., “climate impacts aren't bad”; see Appendix A6 for links), while others perform badly for both (e.g., the basic and intermediate versions of “CO2 was higher in the past”). For the water vapor rebuttal, the change in myth accuracy is one of the better results, while the change in fact accuracy is the second worst.

To explore potential explanations for the varied results more closely, the content of the top three and bottom three rebuttals in Fig. 5c was examined qualitatively. In particular, the rebuttals were inspected for a factual explanation offering at least the same explanatory relevance as the myth (Ecker et al., 2010) and for an explanation of the fallacy the myth uses to distort the facts (Cook et al., 2017a). Overall, the top three rebuttals clearly explained replacement facts, while the bottom three failed to do so. All but one of the six rebuttals lacked an explicit fallacy explanation. The top three rebuttals span three categories of climate misinformation, casting doubt on the reality, cause, and impacts of global warming. The most effective rebuttal debunked the myth “climate impacts are not bad”, followed by the rebuttals countering the myths “climate change is caused by albedo changes” and “greenhouse effect is saturated”.

In the rebuttal to “climate impacts are not bad”, the replacement fact was that the negative impacts of global warming far outweigh the benefits. This fact is clearly and simply communicated, and is reinforced repeatedly as the rebuttal compares negative impacts to benefits across different aspects of the climate (e.g., agriculture, health, polar melting, etc.). However, the rebuttal fails to explicitly explain the myth's fallacy, which is cherry picking2 benefits of climate change while ignoring negative impacts.

The rebuttal to “climate change is caused by albedo changes” does explain the relevant fact, which is that albedo is a feedback amplifying climate change rather than a forcing driving climate change. However, this fact is not highlighted in the “what the science says” box and could have been made more prominent, which may explain why belief in the fact did not increase from this rebuttal. The fallacy in this myth involves cherry picking short periods in order to find spurious correlations between albedo and temperature trends. While the rebuttal does show the long-term trend data which implicitly exposes this fallacy, it fails to explicitly explain the misleading technique.

For the rebuttal to the myth “greenhouse effect is saturated,” the relevant fact is that more heat is being trapped high up in the atmosphere where the air is thinner (Cook et al., 2015). The rebuttal implicitly alludes to this fact, mentioning the need to consider the greenhouse effect at all levels of the atmosphere, but does not explicitly explain it. The rebuttal also fails to explain the fallacy of oversimplification, which treats the atmosphere as a single layer when it in fact consists of multiple layers (Cook et al., 2018; Flack et al., 2024).

The worst and third-worst performing rebuttals were the basic and intermediate rebuttals of “CO2 was higher in the past”. This myth argues that because CO2 was much higher in the Earth's deep past (e.g., over ten times current levels during the Ordovician–Silurian period) without the world burning up, the warming effect of CO2 is in doubt. The relevant fact is that in the Earth's deep past, the sun was cooler when CO2 was higher, with the two forcings roughly balancing each other out (Cook et al., 2015). The myth commits the single-cause fallacy, a form of oversimplification that fails to consider both factors. Neither the basic nor the intermediate debunking explains either the fact or the fallacy.

The second-worst performing rebuttal addressed the myth “it's cooling.” The replacement fact given in the “What the Science Says” box simply says “it's warming”, which is essentially a negation of the myth without any substantive detail. The factual explanations delve into complicated details of ocean cycles and statistical methods without clearly articulating how these details relate to the key fact. The rebuttal does explain the cherry-picking fallacy committed by this myth; it is the only one of the six rebuttals examined (top three and bottom three) that explicitly explains the fallacy.

Our experimental data shed light on the nature of Skeptical Science visitors, with most visitors (66.5 %) already agreeing with climate facts and 46.3 % showing strong agreement with the fact or strong disagreement with the myth (Fig. 3). Understanding why these visitors come to the site is beyond the scope of this study (as we discuss further under limitations), but one possible interpretation is that a large proportion of visitors come to the website not because they are unsure about a particular climate fact or myth, but because they are looking for information to help them respond to climate misinformation (again, we have collected no data to support this interpretation). In analysing comment threads on Skeptical Science, Metcalfe (2020) concluded that commenters seeking out like-minded users were an example of “chanting to the choir.” However, a more constructive interpretation is that Skeptical Science content is “teaching the choir to sing,” providing resources that empower people to respond to climate misinformation (Swim et al., 2014). Such a service is particularly important given that a major reason people self-censor and avoid talking about climate change with friends and family is fear of push-back from climate contrarians (Geiger and Swim, 2016). This avoidance of climate change as a discussion topic, known as climate silence, is self-reinforcing, leading to a “spiral of silence” (Maibach et al., 2016). Conversely, discussing climate change raises awareness of the issue, which leads to more discussion in a positive feedback loop (Goldberg et al., 2019).

Also conflicting with the “chanting to the choir” interpretation is the finding that the greatest improvement in accurate perceptions was observed among those with the strongest disagreement with climate facts or the strongest agreement with climate myths. This was an encouraging result, showing that the website is effective in changing the minds of those most dismissive about climate change. However, a concerning result was that, overall, agreement with climate facts decreased. Inspection of the top three and bottom three rebuttals offers insights into how rebuttals could be made more effective. The better-performing rebuttals identified relevant replacement facts offering equal or greater explanatory relevance than the myths and explained them clearly and simply, while the worst-performing rebuttals failed to clearly explain replacement facts. In addition, explicit explanations of the fallacies used by climate myths should be integrated into the rebuttals, offering a seamless fact-myth-fallacy debunking structure (Lewandowsky et al., 2020). The website is currently being redesigned, with plans to integrate fallacy explanations into the updated content infrastructure and rebuttal design, in line with research showing the effectiveness of fallacy explanations (Cook et al., 2017a). By drawing on existing resources documenting fallacies in climate misinformation (Cook et al., 2015, 2018; Flack et al., 2024), we expect this change may have a greater impact on lowering agreement with myths than on increasing agreement with facts. Future research should assess the effectiveness of updated rebuttals that are more intentional in including replacement facts and fallacy explanations.

One limitation of our study was the measurement of just one outcome variable: agreement with a fact/myth statement. Future studies should aim to gain deeper insight into the impact of rebuttals on readers. One approach would be to collect open-ended feedback from participants in the post-rebuttal survey. Qualitative data with the user reflecting on the readability or comprehensibility of the rebuttal might offer guidance on potential problems with specific rebuttals. Questions specifically targeting motivations could address more definitively why readers visited Skeptical Science, better informing the website creators to meet readers' needs. Another limitation of this study is that it examined the impact of a single exposure to debunking text, a challenging situation given that the effects of misinformation interventions decay over time (Maertens et al., 2025) while the public are often exposed to multiple cases of misinformation over time. Unfortunately, given the real-world field test aspect of this research, with the corresponding lack of control over participant behaviour, addressing this limitation is beyond the scope of our research capability.

However, controlled laboratory experiments can address some of these limitations, for example by testing the impact of repeated exposure to corrective messages (Maertens et al., 2025) or by varying messages in a more controlled fashion to avoid confounds (e.g., exposing participants to the same debunking with or without replacement facts and/or fallacy explanations). In particular, controlled laboratory experiments can address a major weakness of field tests that rely on convenience sampling, namely that the participant sample may not represent the general population. Such research designs would offer more generalizable findings for science communicators, although in the case of this field test the biased sample matched the website's readership and so provided external validity for this research.

A key goal of misinformation interventions is to increase reader discernment, the difference between belief in facts and belief in myths (Pennycook et al., 2021). Although discernment increased overall, because the decrease in agreement with myths was greater than the decrease in agreement with facts, the finding that belief in climate facts decreased for at least some rebuttals is unwelcome and runs counter to the goal of Skeptical Science. By comparison, a recent meta-analysis found that, overall, inoculation against misinformation increases discernment between reliable and unreliable news (Simchon et al., 2025).

A purely fact-based approach to debunking misinformation operates under the assumption of the information deficit model, which assumes that public controversy about climate change can be resolved if enough information is supplied to people. This assumption has been criticised as simplistic, resulting in ineffective climate communication (Suldovsky, 2017). Alternative approaches have been proposed, such as relationship-building between scientists and the public (Cook and Overpeck, 2019), participatory models (Pearce et al., 2015), or the CAUSE (Confidence, Awareness, Understanding, Satisfaction, Enactment) model which has a strong emphasis on building credibility and establishing trust with target audiences (Rowan et al., 2021). Inoculation theory – and in particular, logic-based inoculation – offers a psychological framework for reducing the influence of misinformation in a way that overcomes some of the cultural barriers such as political ideology (Cook et al., 2017a). This underscores the importance of incorporating fallacy explanations in rebuttals, and measuring their effectiveness in increasing reader discernment between facts and myths.

Lastly, the rebuttals examined in this study all focused on climate science myths, which have been a particular focus of Skeptical Science to date. However, recent research indicates that climate misinformation is shifting from science denial to arguments against climate solutions (Coan et al., 2021), with increasing attention being paid to the so-called “discourses of delay” – framings and narratives designed to delay climate action (Lamb et al., 2020). Further, solutions misinformation has been found to be one of the most polarizing forms of climate misinformation, with a disproportionate effect on political conservatives (Lieu et al., 2025). Given this growing threat, Skeptical Science has recently begun incorporating more rebuttals of solutions myths. A collaboration with the Sabin Center for Climate Change Law at Columbia Law School involved adapting its rebuttals of 33 myths about renewable energy into Skeptical Science rebuttals (Eisenson et al., 2023). However, the effectiveness of rebuttals of solutions misinformation is understudied, and experimentally testing their impact would be a useful area of future research.

In summary, collecting quantitative survey data on a live website is technically and scientifically challenging but offers the opportunity to gain deep insights into pre-existing and updated perceptions of visitors after reading website content. In this study, we obtained insights into climate perceptions of visitors as they arrived at the website. We also learned that our rebuttals decreased belief in climate myths and improved discernment – the difference between belief in facts and myths. However, we also observed a decrease in agreement with climate facts, an unwelcome result necessitating investigation into possible causes. In turn, the subsequent analysis offered guidance on ways in which the rebuttals could be updated to be more effective, by including explanations of “replacement facts” that dislodge the myths being debunked, bringing the rebuttals in line with the recommendations of psychological research.

A1 Handbooks

A1.1 The Debunking Handbook

Skeptical Science also provides downloadable materials such as handbooks devoted to various aspects of misinformation research. The Debunking Handbook is a consensus document written by 19 co-authors, invited by the three lead authors Stephan Lewandowsky, John Cook, and Ullrich Ecker on the basis of their scientific standing in the field. The Handbook explains what mis- and disinformation are, why they can cause substantial harm to individuals and societies, why they are often sticky and therefore hard to dislodge, why prebunking can be more effective than debunking, and how best to debunk. As of July 2025, this handbook has been translated into 20 languages.

A1.2 The Conspiracy Theory Handbook

Conspiracy theories attempt to explain events as the secretive plots of powerful people. While conspiracy theories are not typically supported by evidence, this does not stop them from flourishing. Conspiracy theories damage society in a number of ways. To help minimize these harmful effects, The Conspiracy Theory Handbook (Lewandowsky and Cook, 2020) explains why conspiracy theories are so popular, how to identify the traits of conspiratorial thinking, and which response strategies are effective. As of July 2025, this handbook has been translated into 20 languages. The Handbook distills the most important research findings and expert advice on dealing with conspiracy theories. It also introduces the acronym CONSPIR as a mnemonic for the seven traits of conspiratorial thinking: Contradictory, Overriding suspicion, Nefarious intent, Something must be wrong, Persecuted victim (peddlers of conspiracy theories see themselves as persecuted victims), Immune to evidence, and Re-interpreting randomness.

A2 Massive Open Online Course: Denial101x

In 2015, the Skeptical Science team, in collaboration with the University of Queensland, produced a Massive Open Online Course (MOOC) titled Denial101x: Making Sense of Climate Science Denial (Cook et al., 2017b; Winkler and Cook, 2021), which ran from April 2015 to February 2024. The course included the fact-myth-fallacy resource (published at https://sks.to/fmf, last access: 17 February 2026).

A3 Translations

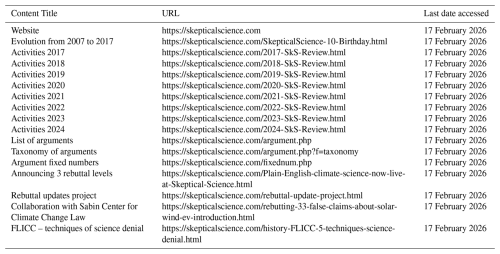

In 2009, translation capabilities for rebuttals were added to the website, and since then volunteer translators have published 1086 translations in 25 languages. The number of translations varies widely by language, from fewer than five for some languages to as many as 213 for others. Table A2 shows the top 15 languages by number of published translations.

A4 Conference presentations

Skeptical Science and its MOOC have been presented at the EGU General Assembly (Winkler and Cook, 2020, 2021).

A5 Links to Skeptical Science Content and Resources

Anonymised data have been uploaded to the Open Science Framework at https://osf.io/jnce4/ (last access: 17 February 2026).

JC and BW contributed to the data analysis and writing of results. CM, TL, and DB contributed descriptions of the experimental implementation. DN contributed to the writing of the manuscript.

The contact author has declared that none of the authors has any competing interests.

This study was conducted with ethics approval obtained from the George Mason University Institutional Review Board (IRBNet number: 1379945-1).

Publisher's note: Copernicus Publications remains neutral with regard to jurisdictional claims made in the text, published maps, institutional affiliations, or any other geographical representation in this paper. The authors bear the ultimate responsibility for providing appropriate place names. Views expressed in the text are those of the authors and do not necessarily reflect the views of the publisher.

This article is part of the special issue “Climate and ocean education and communication: practice, ethics, and urgency”. It is not associated with a conference.

The Skeptical Science team acknowledges the tireless contributions of John Mason who led the revision of rebuttals and addition of the “At a Glance” sections.

This paper was edited by Mathew Stiller-Reeve and reviewed by Theresia Bilola and two anonymous referees.

Bedford, D. and Cook, J.: Agnotology, scientific consensus, and the teaching and learning of climate change: A response to Legates, Soon and Briggs, Science and Education, 22, 2019–2030, 2013.

Coan, T. G., Boussalis, C., Cook, J., and Nanko, M. O.: Computer-assisted classification of contrarian claims about climate change, Scientific Reports, 11, 22320, https://doi.org/10.1038/s41598-021-01714-4, 2021.

Cook, B. R. and Overpeck, J. T.: Relationship-building between climate scientists and publics as an alternative to information transfer, Wiley Interdisciplinary Reviews: Climate Change, 10, e570, https://doi.org/10.1002/wcc.570, 2019.

Cook, J.: Deconstructing Climate Science Denial, in: Edward Elgar Research Handbook in Communicating Climate Change, edited by: Holmes, D. and Richardson, L. M., Cheltenham, UK, Edward Elgar, 62–78, https://doi.org/10.4337/9781789900408.00014, 2020.

Cook, J., Nuccitelli, D., Green, S. A., Richardson, M., Winkler, B., Painting, R., Way, R., Jacobs, P., and Skuce, A.: Quantifying the consensus on anthropogenic global warming in the scientific literature, Environmental Research Letters, 8, 024024, https://doi.org/10.1088/1748-9326/8/2/024024, 2013.

Cook, J., Schuennemann, K., Nuccitelli, D., Jacobs, P., Cowtan, K., Green, S., Way, R., Richardson, M., Cawley, G., Mandia, S., Skuce, A., and Bedford, D.: Denial101x: Making sense of climate science denial, YouTube [video], https://www.youtube.com/user/denial101x (last access: 17 February 2026), 2015.

Cook, J., Lewandowsky, S., and Ecker, U.: Neutralizing misinformation through inoculation: Exposing misleading argumentation techniques reduces their influence, PLOS ONE, 12, e0175799, https://doi.org/10.1371/journal.pone.0175799, 2017a.

Cook, J., Winkler, B., Finn, C., and Dodgen, T.: Challenges and learning opportunities in a controversial MOOC forum on climate science denial, in: 10th International Conference of Education, Research and Innovation (ICERI2017), IATED Academy, 3460–3468, https://doi.org/10.21125/iceri.2017.0947, 2017b.

Cook, J., Oreskes, N., Doran, P. T., Anderegg, W. R. L., Verheggen, B., Maibach, E. W., Carlton, J. S., Lewandowsky, S., Green, S. A., Skuce, A. G., Nuccitelli, D., Jacobs, P., Richardson, M., Winkler, B., Painting, R., and Rice, K.: Consensus on consensus: a synthesis of consensus estimates on human-caused global warming, Environmental Research Letters, 11, 048002, https://doi.org/10.1088/1748-9326/11/4/048002, 2016.

Cook, J., Ellerton, P., and Kinkead, D.: Deconstructing climate misinformation to identify reasoning errors, Environmental Research Letters, 13, 024018, https://doi.org/10.1088/1748-9326/aaa49f, 2018.

Ecker, U. K., Lewandowsky, S., and Tang, D. T.: Explicit warnings reduce but do not eliminate the continued influence of misinformation, Memory and Cognition, 38, 1087–1100, 2010.

Eisenson, M., Elkin, J., Fitch, A., Ard, M., Sittinger, K., and Lavine, S.: Rebutting 33 false claims about solar, wind, and electric vehicles, Sabin Center for Climate Change Law, https://climate.law.columbia.edu/news/sabin-center-releases-new-report-rebutting-33-false-claims-about-solar-wind-and-electric (last access: 17 February 2026), 2023.

Elsasser, S. W. and Dunlap, R. E.: Leading voices in the denier choir: Conservative columnists' dismissal of global warming and denigration of climate science, American Behavioral Scientist, 57, 754–776, 2013.

Flack, R., Cook, J., Ellerton, P., Kinkead, D., Coan, T., Boussalis, C., Nanko, M. O., Gallant, A., and Dargaville, R.: Identifying Flawed Reasoning in Contrarian Claims about Climate Change, https://osf.io/preprints/psyarxiv/tk76c (last access: 17 February 2026), 2024.

Geiger, N. and Swim, J. K.: Climate of silence: Pluralistic ignorance as a barrier to climate change discussion, Journal of Environmental Psychology, 47, 79–90, 2016.

Goldberg, M. H., van der Linden, S., Maibach, E., and Leiserowitz, A.: Discussing global warming leads to greater acceptance of climate science, Proceedings of the National Academy of Sciences, 116, 14804–14805, 2019.

Hansson, S. O.: Science denial as a form of pseudoscience, Studies in History and Philosophy of Science Part A, 63, 39–47, 2017.

Jolley, D. and Douglas, K. M.: The social consequences of conspiracism: Exposure to conspiracy theories decreases intentions to engage in politics and to reduce one's carbon footprint, British Journal of Psychology, 105, 35–56, 2014.

Lamb, W. F., Mattioli, G., Levi, S., Roberts, J. T., Capstick, S., Creutzig, F., Minx, J. C., Müller-Hansen, F., Culhane, T., and Steinberger, J. K.: Discourses of climate delay, Global Sustainability, 3, https://doi.org/10.1017/sus.2020.13, 2020.

Lewandowsky, S. and Cook, J.: The Conspiracy Theory Handbook, https://sks.to/conspiracy (last access: 17 February 2026), 2020.

Lewandowsky, S., Oreskes, N., Risbey, J. S., Newell, B. R., and Smithson, M.: Seepage: Climate change denial and its effect on the scientific community, Global Environmental Change, 33, 1–13, 2015.

Lewandowsky, S., Cook, J., Ecker, U. K. H., Albarracín, D., Amazeen, M. A., Kendeou, P., Lombardi, D., Newman, E. J., Pennycook, G., Porter, E., Rand, D. G., Rapp, D. N., Reifler, J., Roozenbeek, J., Schmid, P., Seifert, C. M., Sinatra, G. M., Swire-Thompson, B., van der Linden, S., Vraga, E. K., Wood, T. J., and Zaragoza, M. S.: The Debunking Handbook 2020, https://doi.org/10.17910/b7.1182, 2020.

Lieu, R., Hayes, O. R., and Cook, J.: Testing the impact of fallacies and contrarian claims in climate change misinformation, British Journal of Psychology, https://doi.org/10.1111/bjop.70049, 2025.

Maibach, E., Leiserowitz, A., Rosenthal, S., Roser-Renouf, C., and Cutler, M.: Is There a Climate “Spiral of Silence” in America: March, 2016, Yale Program on Climate Change Communication, New Haven, CT, https://hdl.handle.net/20.500.12592/5m992f (last access: 17 February 2026), 2016.

Maertens, R., Roozenbeek, J., Simons, J. S., Lewandowsky, S., Maturo, V., Goldberg, B., and van der Linden, S.: Psychological booster shots targeting memory increase long-term resistance against misinformation, Nature Communications, 16, 2062, https://doi.org/10.1038/s41467-025-57205-x, 2025.

McCright, A. M., Charters, M., Dentzman, K., and Dietz, T.: Examining the effectiveness of climate change frames in the face of a climate change denial counter-frame, Topics in Cognitive Science, 8, 76–97, 2016.

McCuin, J. L., Hayhoe, K., and Hayhoe, D.: Comparing the Effects of Traditional vs. Misconceptions-Based Instruction on Student Understanding of the Greenhouse Effect, Journal of Geoscience Education, 62, 445–459, 2014.

Metcalfe, J.: Chanting to the choir: the dialogical failure of antithetical climate change blogs, Journal of Science Communication, 19, https://doi.org/10.22323/2.19020204, 2020.

Modirrousta-Galian, A. and Higham, P. A.: Gamified inoculation interventions do not improve discrimination between true and fake news: Reanalyzing existing research with receiver operating characteristic analysis, Journal of Experimental Psychology: General, 152, 2411, https://doi.org/10.1037/xge0001395, 2023.

Pearce, W., Brown, B., Nerlich, B., and Koteyko, N.: Communicating climate change: Conduits, content, and consensus, Wiley Interdisciplinary Reviews: Climate Change, 6, 613–626, 2015.

Pennycook, G., Cannon, T. D., and Rand, D. G.: Prior exposure increases perceived accuracy of fake news, Journal of Experimental Psychology: General, 147, 1865, https://doi.org/10.1037/xge0000465, 2018.

Ranney, M. A. and Clark, D.: Climate Change Conceptual Change: Scientific Information Can Transform Attitudes, Topics in Cognitive Science, 8, 49–75, 2016.

Rowan, K. E., Engblom, A., Hathaway, J., Lloyd, R., Vorster, I., Anderson, E. Z., and Akerlof, K. L.: Overcome the deficit model by applying the CAUSE model to climate change communication, in: The Handbook of Strategic Communication, 225–261, https://doi.org/10.1002/9781118857205.ch16, 2021.

Schwarz, N., Newman, E., and Leach, W.: Making the truth stick and the myths fade: Lessons from cognitive psychology, Behavioral Science and Policy, 2, 85–95, 2016.

Seifert, C. M.: The continued influence of misinformation in memory: What makes a correction effective?, in: Psychology of learning and motivation, Academic Press, 41, 265–292, https://doi.org/10.1016/S0079-7421(02)80009-3, 2002.

Simchon, A., Zipori, T., Teitelbaum, L., Lewandowsky, S., and van der Linden, S.: A Signal Detection Theory Meta-Analysis of Psychological Inoculation Against Misinformation, Current Opinion in Psychology, 102194, https://doi.org/10.1016/j.copsyc.2025.102194, 2025.

Suldovsky, B.: The information deficit model and climate change communication, in: Oxford Research Encyclopedia of Climate Science, Oxford University Press, https://doi.org/10.1093/acrefore/9780190228620.013.301, 2017.

Swim, J. K., Fraser, J., and Geiger, N.: Teaching the choir to sing: Use of social science information to promote public discourse on climate change, Journal of Land Use and Environmental Law, 30, 91–117, 2014.

van der Linden, S.: The conspiracy-effect: Exposure to conspiracy theories (about global warming) decreases pro-social behavior and science acceptance, Personality and Individual Differences, 87, 171–173, 2015.

van der Linden, S., Leiserowitz, A., Rosenthal, S., and Maibach, E.: Inoculating the Public against Misinformation about Climate Change, Global Challenges, 1, 1600008, https://doi.org/10.1002/gch2.201600008, 2017.

Vraga, E. K., Kim, S. C., Cook, J., and Bode, L.: Testing the effectiveness of correction placement and type on Instagram, The International Journal of Press/Politics, 25, 632–652, 2020.

Winkler, B. and Cook, J.: The story of Skeptical Science: How citizen science helped to turn a website into a go-to resource for climate science, EGU, https://presentations.copernicus.org/EGU2020/EGU2020-562_presentation.pdf (last access: 17 February 2026), 2020.

Winkler, B. and Cook, J.: Using an interdisciplinary MOOC to teach climate science and science communication to a global classroom, EGU General Assembly 2021, online, 19–30 Apr 2021, EGU21-8576, https://doi.org/10.5194/egusphere-egu21-8576, 2021.

Zainurrahman, Z., Yusuf, F. N., and Sukyadi, D.: Text readability: its impact on reading comprehension and reading time, Journal of Education and Learning (EduLearn), 18, 1422–1432, 2024.

Zanartu, F., Cook, J., Frermann, L., and Otmakhova, Y.: Generative Debunking of Climate Misinformation, ClimateNLP 2024 Workshop, arXiv [preprint], https://doi.org/10.48550/arXiv.2407.05599, 2024.

Analysing the search phrases that visitors used to find Skeptical Science is beyond the scope of this study. However, we speculate on why most readers come to Skeptical Science in the Results and Discussion section.

Cherry picking involves carefully selecting data that appear to confirm one position while ignoring other data that contradict that position, such as highlighting the benefits of climate change while neglecting that, overall, its negative impacts outweigh those benefits (Cook, 2020).